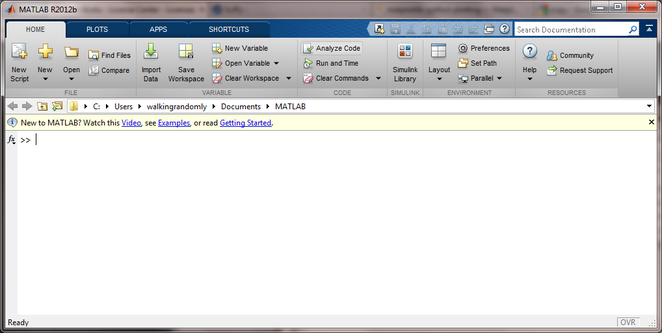

I felt like playing with Julia and MATLAB this Sunday morning. I found some code that prices European Options in MATLAB using Monte Carlo simulations over at computeraidedfinance.com and thought that I’d port this over to Julia. Here’s the original MATLAB code

function V = bench_CPU_European(numPaths) %Simple European steps = 250; r = (0.05); sigma = (0.4); T = (1); dt = T/(steps); K = (100); S = 100 * ones(numPaths,1); for i=1:steps rnd = randn(numPaths,1); S = S .* exp((r-0.5*sigma.^2)*dt + sigma*sqrt(dt)*rnd); end V = mean( exp(-r*T)*max(K-S,0) )

I ran this a couple of times to see what results I should be getting and how long it would take for 1 million paths:

tic;bench_CPU_European(1000000);toc V = 13.1596 Elapsed time is 6.035635 seconds. >> tic;bench_CPU_European(1000000);toc V = 13.1258 Elapsed time is 5.924104 seconds. >> tic;bench_CPU_European(1000000);toc V = 13.1479 Elapsed time is 5.936475 seconds.

The result varies because this is a stochastic process but we can see that it should be around 13.1 or so and takes around 6 seconds on my laptop. Since it’s Sunday morning, I am feeling lazy and have no intention of considering if this code is optimal or not right now. I’m just going to copy and paste it into a julia file and hack at the syntax until it becomes valid Julia code. The following seems to work

function bench_CPU_European(numPaths) steps = 250 r = 0.05 sigma = .4; T = 1; dt = T/(steps) K = 100; S = 100 * ones(numPaths,1); for i=1:steps rnd = randn(numPaths,1) S = S .* exp((r-0.5*sigma.^2)*dt + sigma*sqrt(dt)*rnd) end V = mean( exp(-r*T)*max(K-S,0) ) end

I ran this on Julia and got the following

julia> tic();bench_CPU_European(1000000);toc() elapsed time: 36.259000062942505 seconds 36.259000062942505 julia> bench_CPU_European(1000000) 13.114855104505445

The Julia code appears to be valid, it gives the correct result of 13.1 ish but at 36.25 seconds is around 6 times slower than the MATLAB version. The dog needs walking so I’m going to think about this another time but comments are welcome.

Update (9pm 7th October 2012): I’ve just tried this Julia code on the Linux partition of the same laptop and 1 million paths took 14 seconds or so:

tic();bench_CPU_European(1000000);toc() elapsed time: 14.146281957626343 seconds

I built this version of Julia from source and so it’s at the current bleeding edge (version 0.0.0+98589672.r65a1 Commit 65a1f3dedc (2012-10-07 06:40:18). The code is still slower than the MATLAB version but better than the older Windows build

Update: 13th October 2012

Over on the Julia mailing list, someone posted a faster version of this simulation in Julia

function bench_eu(numPaths)

steps = 250

r = 0.05

sigma = .4;

T = 1;

dt = T/(steps)

K = 100;

S = 100 * ones(numPaths,1);

t1 = (r-0.5*sigma.^2)*dt

t2 = sigma*sqrt(dt)

for i=1:steps

for j=1:numPaths

S[j] .*= exp(t1 + t2*randn())

end

end

V = mean( exp(-r*T)*max(K-S,0) )

end

On the Linux partition of my test machine, this got through 1000000 paths in 8.53 seconds, very close to the MATLAB speed:

julia> tic();bench_eu(1000000);toc() elapsed time: 8.534484148025513 seconds

It seems that, when using Julia, one needs to unlearn everything you’ve ever learned about vectorisation in MATLAB.

Update: 28th October 2012

Members of the Julia team have been improving the performance of the randn() function used in the above code (see here and here for details). Using the de-vectorised code above, execution time for 1 million paths in Julia is now down to 7.2 seconds on my machine on Linux. Still slower than the MATLAB 2012a implementation but it’s getting there. This was using Julia version 0.0.0+100403134.r0999 Commit 099936aec6 (2012-10-28 05:24:40)

tic();bench_eu(1000000);toc() elapsed time: 7.223690032958984 seconds 7.223690032958984

- Laptop model: Dell XPS L702X

- CPU:Intel Core i7-2630QM @2Ghz software overclockable to 2.9Ghz. 4 physical cores but total 8 virtual cores due to Hyperthreading.

- RAM: 8 Gb

- OS: Windows 7 Home Premium 64 bit and Ubuntu 12.04

- MATLAB: 2012a

- Julia: Original windows version was Version 0.0.0+94063912.r17f5, Commit 17f50ea4e0 (2012-08-15 22:30:58). Several versions used on Linux since, see text for details.