Archive for August, 2009

My latest toy is the HTC Hero, which my phone provider, T-Mobile, insists on calling the G2 Touch. I’ve got quite a lot to say about this particular device and its operating system – Android – but I’ll leave that for a longer blog post in the future. For now I want to talk about a problem I discovered this morning when I plugged in some headphones for the first time – they simply didn’t work.

At first I thought the problem lay with my headphones so I didn’t worry too much. However, someone in my office also has an HTC Hero, and his headphones work fine on his phone but didn’t work on mine. Oh dear!

So the question was ‘Is this software (which would be OK) or hardware (which wouldn’t)?’ The practical upshot is that what worked for me was to switch my phone off completely, by holding down the off button until the phone options menu pops up and selecting Power Off, and then to switch it on again. Once the reboot had completed the headphones worked like a charm.

Someone over at the forums at www.4winmobile.com also had an issue with the headphones and thought it was related to a process named com.htc.dcs. I can’t confirm this one way or the other because the process doesn’t currently show up in Taskiller on my phone and yet the headphones still work fine. I remember seeing it on the list earlier, and the headphones worked fine then too. Before you ask – no, it hasn’t been added to the Taskiller ignore list as far as I can tell.

So, I’m not sure what is causing this headphone issue with the HTC Hero but the thing to bear in mind is that, for me at least, it is a software issue that can be fixed. The only thing we have to do now is make sure we can come up with a foolproof way of fixing it for everyone so let me know if the above worked for you.

Comments welcomed.

Update (27th August 2009): Since I wrote the above post I have learned that this is a known issue in Android 1.5 and that a workaround has been developed. The workaround is called toggleheadset and has been reported to work for some people, but personally I haven’t needed to use it.

It is not currently available in the Android Market but you can get the .apk file from the following URL

http://code.google.com/p/toggleheadset/

In order to install this .apk file you will need to ensure that you have allowed the installation of non-market applications first.

I stumbled across this website via one of my friends’ Twitter feeds and it made me smile. Not everyone is impressed with Wolfram Alpha, it seems :)

The Numerical Algorithms Group (NAG) produce some of the best mathematical libraries money can buy (and no…I don’t work for them – I’m just a very happy customer). Although they are written in C and Fortran it is possible to use them in almost any programming environment you care to mention – I’ve personally used them with both Python and Visual Basic for example as well as making extensive use of their toolbox for MATLAB.

More recently, Anna Kwiczala of NAG has written an article demonstrating how to use them in the free, open source MATLAB-like package, Octave. Head over there to check it out.

Let me know if you are a user of the NAG library as I’d quite like to swap notes.

One of the things I love most about blogging is the interaction with readers via the comments section. Put simply you guys know your onions and although I (hopefully) have something useful and/or interesting to tell you, there is a heck of a lot of stuff that you could tell me.

In the comments section of one of my recent posts, Sander Huisman did exactly that when he suggested that I shouldn’t always use Table in Mathematica when generating lists. This was news to me – I thought that Table was the accepted and possibly the fastest way to do it in Mathematica but it seems not. Here is a concrete example as provided by Sander.

AbsoluteTiming[Table[PrimeQ[i], {i, 0, 10000000}];]

AbsoluteTiming[PrimeQ /@ Range[0, 10000000];]

Both of these Mathematica expressions test each of the integers from 0 to 10000000 to see if it is prime, and what you end up with is a list of Booleans – {False, False, True, True, False, True, False, True, False, False} and so on. The difference is that the first expression is noticeably slower than the second.

If, like me, you struggle to remember what the punctuation operators (/. /@ etc) actually do in Mathematica then know that foo /@ bar is equivalent to Map[foo,bar] where foo is a function and bar is a list.

On one of my test machines, the first expression evaluated in 10.012 seconds on average whereas the second evaluated in 8.28 seconds on average (both averages are over 100 runs).

So Map is faster than Table, right?

Maybe, maybe not. Try the following examples (again provided by Sander) where we generate a list of i+2 for i between 1 and 10^7.

AbsoluteTiming[ Table[i + 2, {i, 10^7}];]

AbsoluteTiming[# + 2 & /@ Range[10^7];]

For this calculation Table did it in 0.66 seconds and /@ (or Map) did it in 0.96 seconds (both averaged over 100 runs). As Sander says ‘Sometimes it is worth looking in to the way you make a list!’.
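The same kind of experiment is easy to repeat in other languages. The following is only a rough sketch in Python, not a translation of Sander's code: the list comprehension and map loosely play the roles of Table and /@, the function names are made up for illustration, and the list size and number of runs are much smaller than the ones used above so that it finishes quickly. Which version wins will depend on the machine and the Python version, so no timings are quoted here.

```python
import timeit

N = 100_000  # far smaller than the 10^7 used above, to keep the run short


def build_with_comprehension(n=N):
    # Loosely analogous to Table[i + 2, {i, n}]
    return [i + 2 for i in range(1, n + 1)]


def build_with_map(n=N):
    # Loosely analogous to # + 2 & /@ Range[n]
    return list(map(lambda i: i + 2, range(1, n + 1)))


def average_seconds(func, runs=20):
    # timeit.timeit returns the total time for `runs` calls, so divide by runs
    return timeit.timeit(func, number=runs) / runs


if __name__ == "__main__":
    for func in (build_with_comprehension, build_with_map):
        print(f"{func.__name__}: {average_seconds(func):.6f} s per run")
```

Averaging over several runs, as was done for the Mathematica timings, smooths out the noise from whatever else the machine happens to be doing.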

Comments welcomed.

Maplesoft, developers of the popular computer algebra system Maple, have been bought by Cybernet Systems Co., Ltd., a major Tokyo-based importer and distributor of CAE (computer aided engineering) software. More details here.

I just hope that this turns out to be a good thing. I like Maple and I don’t want it messed up!

I often get sent a piece of code written in something like MATLAB or Mathematica and get asked ‘how can I make this faster?’. I have no doubt that some of you are thinking that the correct answer is something like ‘completely rewrite it in Fortran or C’ and if you are then I can only assume that you have never been face to face with a harassed, sleep-deprived researcher who has been sleeping in the lab for 7 days straight in order to get some results prepared for an upcoming conference.

Telling such a person that they should throw their painstakingly put-together piece of code away and learn a considerably more difficult language in order to rewrite the whole thing is unhelpful at best and likely to result in the loss of an eye at worst. I have found that a MUCH better course of action is to profile the code, find out where the slow bit is and then do what you can with that. Often you can get very big speedups in return for only a modest amount of work and as a bonus you get to keep the use of both of your eyes.
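The profile-first approach is not tied to MATLAB. As a minimal sketch in Python, assuming a made-up analysis with one deliberately slow part and one cheap part (every function name here is hypothetical), the standard cProfile and pstats modules can be used to see where the time goes:

```python
import cProfile
import io
import pstats


def slow_part(n):
    # Deliberately naive loop standing in for the real bottleneck
    total = 0.0
    for i in range(n):
        total += i ** 0.5
    return total


def fast_part(n):
    # Cheap closed-form calculation
    return n * (n - 1) // 2


def analysis(n=200_000):
    return slow_part(n) + fast_part(n)


def profile_report(func, top=5):
    # Run func under the profiler and return the report as a string
    profiler = cProfile.Profile()
    profiler.enable()
    func()
    profiler.disable()
    buffer = io.StringIO()
    stats = pstats.Stats(profiler, stream=buffer)
    stats.sort_stats("cumulative").print_stats(top)
    return buffer.getvalue()


if __name__ == "__main__":
    print(profile_report(analysis))
```

The report lists each function with its call count and cumulative time, which is usually enough to tell you which one or two functions deserve your attention.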

Recently I had an email from someone who had profiled her MATLAB code and had found that it was spending a lot of time in the interp1 function. Would it be possible, she wondered, to use the NAG toolbox for MATLAB (which hooks into NAG’s superfast Fortran library) to get her results faster? Between the two of us and the NAG technical support team we eventually discovered that the answer was a very definite yes.

Rather than use her actual code, which is rather complicated and domain-specific, let’s take a look at a highly simplified version of her problem. Let’s say that you have the following data-set

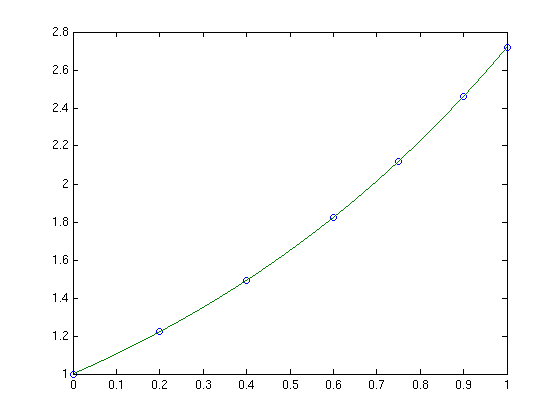

x = [0;0.2;0.4;0.6;0.75;0.9;1];
y = [1;1.22140275816017;1.49182469764127;1.822118800390509;2.117000016612675;2.45960311115695;2.718281828459045];

and you want to interpolate this data on a fine grid between x=0 and x=1 using piecewise cubic Hermite interpolation. One way of doing this in MATLAB is to use the interp1 function as follows

tic
t=0:0.00005:1;
y1 = interp1(x,y,t,'pchip');
toc

The tic and toc statements are there simply to provide timing information and on my system the above code took 0.503 seconds. We can do exactly the same calculation using the NAG toolbox for MATLAB:

tic
[d,ifail] = e01be(x,y);
[y2, ifail] = e01bf(x,y,d,t);
toc

which took 0.054958 seconds on my system, making it around 9 times faster than the pure MATLAB solution – not bad at all for such a tiny amount of work. Of course y1 and y2 are identical, as the following call to norm demonstrates

norm(y1-y2)

ans =

0
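For anyone curious about what piecewise cubic Hermite interpolation actually involves, here is a rough pure-Python sketch of a monotonicity-preserving scheme in the Fritsch-Carlson style. It is not MATLAB's or NAG's implementation and makes no attempt at speed; the function names are invented for illustration, and the end-point slope rule is one common choice that is assumed here rather than taken from either product. The data are the x and y values given above, which match exp(x) to the digits shown.

```python
from bisect import bisect_right
from math import exp


def end_slope(h0, h1, del0, del1):
    # One-sided three-point slope estimate, limited to preserve shape
    d = ((2 * h0 + h1) * del0 - h0 * del1) / (h0 + h1)
    if d * del0 <= 0:
        return 0.0
    if del0 * del1 < 0 and abs(d) > 3 * abs(del0):
        return 3 * del0
    return d


def pchip_slopes(x, y):
    # Needs at least three strictly increasing x values
    n = len(x)
    h = [x[k + 1] - x[k] for k in range(n - 1)]
    delta = [(y[k + 1] - y[k]) / h[k] for k in range(n - 1)]
    d = [0.0] * n
    for k in range(1, n - 1):
        # Slope stays 0 at a local extremum; otherwise use a weighted
        # harmonic mean of the two neighbouring secant slopes
        if delta[k - 1] * delta[k] > 0:
            w1 = 2 * h[k] + h[k - 1]
            w2 = h[k] + 2 * h[k - 1]
            d[k] = (w1 + w2) / (w1 / delta[k - 1] + w2 / delta[k])
    d[0] = end_slope(h[0], h[1], delta[0], delta[1])
    d[-1] = end_slope(h[-1], h[-2], delta[-1], delta[-2])
    return d


def pchip_eval(x, y, d, t):
    # Find the interval [x[k], x[k+1]] containing t (clamped at the ends)
    k = min(max(bisect_right(x, t) - 1, 0), len(x) - 2)
    h = x[k + 1] - x[k]
    s = (t - x[k]) / h
    # Cubic Hermite basis functions on the unit interval
    h00 = 2 * s**3 - 3 * s**2 + 1
    h10 = s**3 - 2 * s**2 + s
    h01 = -2 * s**3 + 3 * s**2
    h11 = s**3 - s**2
    return h00 * y[k] + h10 * h * d[k] + h01 * y[k + 1] + h11 * h * d[k + 1]


x = [0, 0.2, 0.4, 0.6, 0.75, 0.9, 1]
y = [1, 1.22140275816017, 1.49182469764127, 1.822118800390509,
     2.117000016612675, 2.45960311115695, 2.718281828459045]

if __name__ == "__main__":
    d = pchip_slopes(x, y)
    for t in (0.1, 0.5, 0.95):
        print(t, pchip_eval(x, y, d, t), exp(t))
```

Each interval gets its own cubic, built from the two end values and the two end slopes, so the curve passes through every data point and does not overshoot between them.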

The real code contained a lot more than just a call to interp1, but using the above technique decreased the run time of the user’s application from 1 hour 10 minutes to only 26 minutes.
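These numbers allow a rough back-of-the-envelope check. If one assumes that the interpolation calls in the real application sped up by the same factor as in the toy example (this is purely an assumption, since the real code was not timed this way here), then the fraction p of the original run time spent in interp1 follows from total_after = total_before * ((1 - p) + p / s), where s is the speedup of the hot spot. A small Python sketch of that arithmetic, with a hypothetical helper name:

```python
def hotspot_fraction(total_before, total_after, hotspot_speedup):
    # Solve total_after = total_before * ((1 - p) + p / s) for p
    saved = 1.0 - total_after / total_before
    return saved / (1.0 - 1.0 / hotspot_speedup)


def toy_speedup():
    # Timings quoted above for interp1 and for the NAG calls, in seconds
    return 0.503 / 0.054958


if __name__ == "__main__":
    s = toy_speedup()
    # 1 hour 10 minutes = 70 minutes before, 26 minutes after
    p = hotspot_fraction(70.0, 26.0, s)
    print(f"speedup of the toy example: {s:.2f}x")
    print(f"implied share of run time in interp1: {p:.1%}")
```

Under that assumption, roughly 70% of the original run time would have been spent interpolating, which is consistent with the profiler having pointed at interp1 in the first place.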

Thanks to the NAG technical support team for their assistance with this particular problem and to the postgraduate student who came up with the idea in the first place.